In this previous post, we described how the Laplace method (or Laplace approximation) allows us to approximate a probability distribution with a Gaussian distribution with suitably chosen parameters. In this post, we show how it can be used to estimate the posterior distribution of the parameters and output of a neural network (NN), or really any prediction function. This post generally follows the exposition in Section 5.7.1 of Bishop (2006) (Reference 1).

Set-up

Assume that we are in the supervised learning setting with i.i.d. data $\mathcal{D} = \{(x_1, y_1), \dots, (x_n, y_n)\}$, where $x_i \in \mathbb{R}^d$ and $y_i \in \mathbb{R}$. We have a neural network model $f(x, w)$ which takes in an input vector $x \in \mathbb{R}^d$ and outputs a prediction $f(x, w) \in \mathbb{R}$, where $w$ denotes the network weights. We would like to use the Bayesian framework to learn $w$. To do that, we need to specify a prior distribution for the weights $w$ and a likelihood function for the data, and then we can turn the proverbial Bayesian crank to get posterior distributions.

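Assume the prior distribution of the network weights is normal with some mean $\mu_0$ and precision matrix $\Lambda$ (inverse of covariance matrix):

$$p(w) = \mathcal{N}(w \mid \mu_0, \Lambda^{-1}).$$

Assume that the conditional distribution of the target variable $y$ given $x$ is normal as well, with mean at the neural network output and some precision parameter $\beta$:

$$p(y \mid x, w) = \mathcal{N}(y \mid f(x, w), \beta^{-1}).$$

Since our data is i.i.d., if we assume the $x_i$'s are fixed, our likelihood function is

$$p(\mathcal{D} \mid w) = \prod_{i=1}^n \mathcal{N}(y_i \mid f(x_i, w), \beta^{-1}).$$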

Posterior distribution of NN parameters

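We now have all the ingredients we need for Bayesian inference. The posterior distribution is proportional to the product of the prior and the likelihood:

$$p(w \mid \mathcal{D}) \propto p(w) \, p(\mathcal{D} \mid w).$$

Taking logarithms on both sides:

$$\log p(w \mid \mathcal{D}) = -\frac{1}{2} (w - \mu_0)^\top \Lambda (w - \mu_0) - \frac{\beta}{2} \sum_{i=1}^n \left( y_i - f(x_i, w) \right)^2 + C$$

for some constant $C$ that does not depend on $w$. Here $\mu_0$ and $\Lambda$ are the prior mean and precision matrix, and $\beta$ is the noise precision. If $f(x, w)$ were linear in $w$, the RHS would be a quadratic expression in $w$, which implies that $w \mid \mathcal{D}$ would have a Gaussian distribution with parameters which we can derive. Unfortunately for NNs and many other prediction models, $f(x, w)$ is not linear in $w$.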
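As a concrete illustration of the log posterior above, here is a minimal sketch in plain Python. The one-hidden-unit network `f`, the toy data `xs`/`ys`, and the values of `mu0`, `Lam` and `beta` are all made up for illustration; they do not come from Bishop. The function returns the log posterior up to the additive constant.

```python
import math

def f(x, w):
    """Hypothetical tiny network: one tanh hidden unit, scalar input and output."""
    return w[1] * math.tanh(w[0] * x)

def log_posterior(w, xs, ys, mu0, Lam, beta):
    """Unnormalized log posterior: log prior + log likelihood, constants dropped."""
    d = [wi - mi for wi, mi in zip(w, mu0)]
    n = len(d)
    # Quadratic form (w - mu0)^T Lam (w - mu0) from the Gaussian prior.
    quad = sum(d[i] * Lam[i][j] * d[j] for i in range(n) for j in range(n))
    # Sum of squared residuals from the Gaussian likelihood.
    sse = sum((y - f(x, w)) ** 2 for x, y in zip(xs, ys))
    return -0.5 * quad - 0.5 * beta * sse

# Toy data and hyperparameters (assumed values, for illustration only).
xs = [-1.0, 0.0, 1.0]
ys = [-0.8, 0.1, 0.7]
mu0 = [0.0, 0.0]
Lam = [[1.0, 0.0], [0.0, 1.0]]
beta = 4.0

print(log_posterior([1.0, 1.0], xs, ys, mu0, Lam, beta))
```

Because `f` is nonlinear in `w`, this function is not quadratic in `w`, which is exactly why the posterior is not Gaussian.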

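Instead, we approximate the posterior with Laplace's approximation. The details of Laplace's approximation are in a previous post, so we omit them here. If we let $w_{MAP}$ be the mode of the posterior $p(w \mid \mathcal{D})$, then we can approximate the posterior as

$$q(w \mid \mathcal{D}) = \mathcal{N}(w \mid w_{MAP}, A^{-1}),$$

where

$$A = -\nabla \nabla \log p(w \mid \mathcal{D}) \big|_{w = w_{MAP}} = \Lambda + \beta H.$$

Here $\Lambda$ is the prior precision matrix, $\beta$ is the noise precision, and $H$ is the Hessian of the sum-of-squares error $\frac{1}{2} \sum_{i=1}^n (y_i - f(x_i, w))^2$ with respect to $w$, evaluated at $w_{MAP}$.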
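To make the Laplace step concrete, the following is a rough sketch under simplifying assumptions: a hypothetical two-weight network, toy data, a zero-mean isotropic prior (precision matrix `alpha` times the identity), plain gradient descent to find the mode, and finite differences in place of automatic differentiation. None of these choices come from Bishop; in practice one would use an autodiff library and a proper optimizer.

```python
import math

def f(x, w):
    # Hypothetical tiny network: one tanh hidden unit, scalar input and output.
    return w[1] * math.tanh(w[0] * x)

def neg_log_post(w, xs, ys, alpha, beta):
    # Negative log posterior up to a constant, with prior N(0, alpha^{-1} I).
    sse = sum((y - f(x, w)) ** 2 for x, y in zip(xs, ys))
    return 0.5 * alpha * sum(wi * wi for wi in w) + 0.5 * beta * sse

def grad(fun, w, h=1e-6):
    # Central finite-difference gradient of fun at w.
    g = []
    for i in range(len(w)):
        wp = list(w); wp[i] += h
        wm = list(w); wm[i] -= h
        g.append((fun(wp) - fun(wm)) / (2 * h))
    return g

def hessian(fun, w, h=1e-4):
    # Central finite-difference Hessian of fun at w.
    n = len(w)
    H = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            def shifted(si, sj):
                v = list(w)
                v[i] += si * h
                v[j] += sj * h
                return fun(v)
            H[i][j] = (shifted(1, 1) - shifted(1, -1)
                       - shifted(-1, 1) + shifted(-1, -1)) / (4 * h * h)
    return H

# Toy data and hyperparameters (assumed values, for illustration only).
xs = [-1.0, 0.0, 1.0]
ys = [-0.8, 0.1, 0.7]
alpha, beta = 1.0, 4.0

def E(w):
    return neg_log_post(w, xs, ys, alpha, beta)

# Find a posterior mode by gradient descent on the negative log posterior.
w_map = [1.0, 1.0]
for _ in range(2000):
    g = grad(E, w_map)
    w_map = [wi - 0.05 * gi for wi, gi in zip(w_map, g)]

# Precision matrix of the Gaussian approximation: the Hessian of the
# negative log posterior at the mode.
A = hessian(E, w_map)
print("w_MAP:", w_map)
print("A:", A)
```

Note that this toy posterior has two symmetric modes (flipping the sign of both weights leaves `f` unchanged), and the approximation only describes the one that the optimizer lands in. This is a general caveat of Laplace's approximation for NNs.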

Posterior distribution of target variable

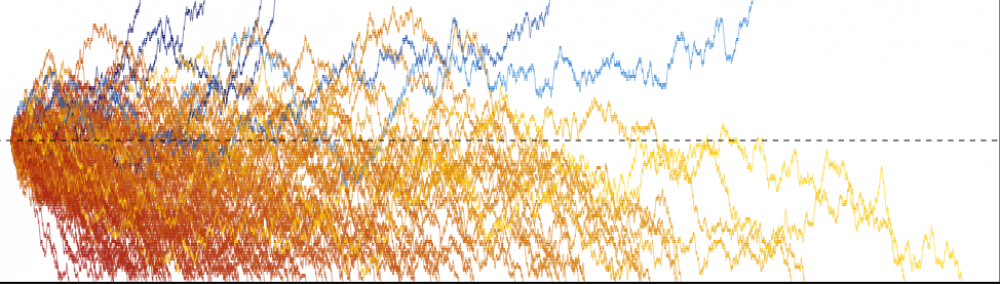

Once we have the posterior distribution of the parameters, we can get the posterior predictive distribution of the target by marginalizing out the parameters:

$$p(y \mid x, \mathcal{D}) = \int p(y \mid x, w) \, q(w \mid \mathcal{D}) \, dw.$$

If the mean of $p(y \mid x, w)$ were linear in $w$, then this would be a linear Gaussian model, and we could use standard results for that model to obtain the posterior predictive distribution (see this previous post). Unfortunately, for NNs this is not the case.
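For reference, the standard linear Gaussian result used here can be stated as follows (the symbols $m$, $S$, $a$, $b$ and $\sigma^2$ are generic placeholders, not notation from the rest of this post). If

$$p(w) = \mathcal{N}(w \mid m, S) \quad \text{and} \quad p(y \mid w) = \mathcal{N}(y \mid a^\top w + b, \sigma^2),$$

then marginalizing out $w$ gives

$$p(y) = \mathcal{N}(y \mid a^\top m + b, \; \sigma^2 + a^\top S a).$$

The variance splits into a noise term and a term that reflects uncertainty in $w$.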

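Again we turn to a Taylor approximation to move forward. Expanding the network output around the posterior mode $w_{MAP}$ and assuming we can ignore quadratic and higher order terms,

$$f(x, w) \approx f(x, w_{MAP}) + g^\top (w - w_{MAP}), \quad \text{where } g = \nabla_w f(x, w) \big|_{w = w_{MAP}}.$$

With this approximation, we have a linear Gaussian model:

$$q(w \mid \mathcal{D}) = \mathcal{N}(w \mid w_{MAP}, A^{-1}), \qquad p(y \mid x, w) \approx \mathcal{N}\left(y \mid f(x, w_{MAP}) + g^\top (w - w_{MAP}), \; \beta^{-1}\right).$$

Applying the result for the linear Gaussian model,

$$p(y \mid x, \mathcal{D}) \approx \mathcal{N}\left(y \mid f(x, w_{MAP}), \; \beta^{-1} + g^\top A^{-1} g\right).$$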
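The predictive mean and variance can be computed directly once the posterior mode and the precision matrix $A$ of the Gaussian approximation are available. The sketch below assumes a hypothetical two-weight network with a hand-derived gradient, and uses made-up placeholder values for `w_map`, `A` and `beta`; it is meant only to show the shape of the computation.

```python
import math

def f(x, w):
    # Hypothetical tiny network: one tanh hidden unit, scalar input and output.
    return w[1] * math.tanh(w[0] * x)

def grad_f(x, w):
    # Gradient of f with respect to the weights (w[0], w[1]), derived by hand.
    t = math.tanh(w[0] * x)
    return [w[1] * x * (1.0 - t * t), t]

def predictive(x, w_map, A, beta):
    """Mean and variance of the approximate posterior predictive at input x."""
    g = grad_f(x, w_map)
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    # z = A^{-1} g using the closed-form inverse of a 2x2 matrix.
    z = [(A[1][1] * g[0] - A[0][1] * g[1]) / det,
         (-A[1][0] * g[0] + A[0][0] * g[1]) / det]
    # Noise variance plus the variance contributed by weight uncertainty.
    var = 1.0 / beta + g[0] * z[0] + g[1] * z[1]
    return f(x, w_map), var

# Placeholder values (assumed, for illustration only).
w_map = [0.75, 0.9]
A = [[4.2, 1.9], [1.9, 4.3]]
beta = 4.0

for x in (0.0, 0.5, 1.0):
    mean, var = predictive(x, w_map, A, beta)
    print(x, mean, var)
```

For this particular network the gradient with respect to the weights vanishes at `x = 0`, so the predictive variance there reduces to the noise variance `1 / beta`. At other inputs the extra term is positive whenever `A` is positive definite.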

References:

- Bishop, C. M. (2006). Pattern Recognition and Machine Learning (Section 5.7.1).